Have you ever imagined a world where you could interact with digital content in a more natural and intuitive way? Apple has announced the release of the visionOS SDK for third-party developers through Xcode 15 beta 2. The SDK offers an infinite canvas for developers to create spatial computing applications that take advantage of Vision Pro and its unique capabilities. With the visionOS SDK, developers can design new application experiences that span productivity, design, gaming, and more.

In addition to this, Apple is opening developer labs in Cupertino, London, Munich, Shanghai, Singapore, and Tokyo next month, providing developers with hands-on experience testing their apps on Apple Vision Pro hardware and getting support from Apple engineers. Developers can also apply for the Developer Kit, which will be released next month, to help them quickly build, iterate, and test their apps directly on Apple Vision Pro.

But what exactly is Vision Pro, and what can we expect from its visionOS software? Here is Gizmoweek's hands-on experience after downloading Xcode 15 beta 2.

Installing the visionOS simulator is straightforward and requires only a valid Apple ID to access the download page. Once it is installed, developers can create a new simulator in Xcode and select visionOS 1.0 as its system version. Xcode does not list specific recommended configurations, but it is worth keeping in mind the disk space the visionOS simulator requires, as well as its performance demands.

Vision Pro is a mixed reality device that emphasizes naturally overlaying application content in physical space. The interface features circular icons, floating highlights, and 3D icons reminiscent of watchOS and tvOS. The main screen floats in front of you, facing your line of sight, and contains the app icons plus two other functional pages accessible through a sidebar on the left.

Apple Vision Pro Experience: Bringing the Real World to Your Screen

One of the functional pages is Contacts, indicating that Apple views communication as one of the main application scenarios for Vision Pro. The other is the “Environment” menu, which theoretically allows users to immerse themselves in various simulated natural landscapes or lighting environments with one click, but it is not yet ready.

The visionOS Simulator

To get a glimpse of what Vision Pro has to offer, developers can use the visionOS simulator, which is provided as an optional download for Xcode 15 (beta 2 or later). The simulator allows developers to test their apps on the Vision Pro platform and get a feel for its unique capabilities.

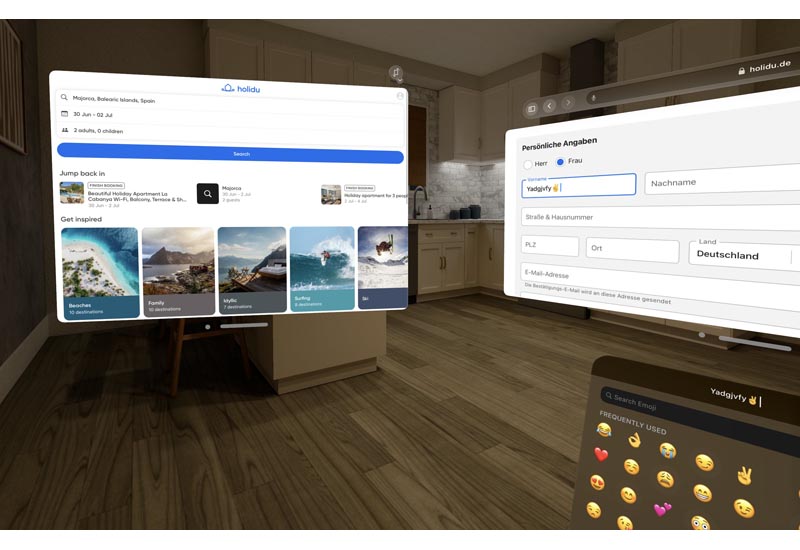

Upon entering the simulator, you are greeted with the main screen floating in front of you, with circular icons and floating highlights. The simulator also provides various scenes for testing, such as living rooms, kitchens, and museums, and each scene can be selected in either daylight or night lighting conditions.

However, since this is a simulator, all operations are performed with the cursor. Clicking selects, a long press and drag adjusts the viewing angle, a two-finger pinch on the touchpad moves forward and backward, and a two-finger swipe pans the view. If you have no touchpad, you need to switch the camera control mode through the menu in the lower right corner of the simulator.

In visionOS, each window has two controls at the bottom: one closes the window and the other adjusts its position. The placement range is 360 degrees, so you can put a window in front of you, on either side, behind you, on the ceiling, or on the floor, as long as your neck agrees. If you focus on the lower left corner of the window, an arc appears, and dragging it resizes the window. For iPadOS apps running in compatibility mode, there is also an orientation button in the upper right corner that switches the app between horizontal and vertical layouts.

Slightly above the middle of the field of view, you can always see a small blue dot, which is the control menu in visionOS. Clicking it reveals the time, battery level, volume, and other information, as well as several buttons that display the main screen, set the brightness theme, and open the control center and notification center, among others.

With Apple’s Vision Pro and visionOS SDK, we are seeing the future of wearable devices. The ability to seamlessly integrate digital content with the physical world around us has the potential to change the way we interact with technology. From productivity to design to gaming, the possibilities for spatial computing applications are endless.

From what we have seen so far, the future looks bright for Apple’s Vision Pro and visionOS. We can’t wait to see what developers create with this new technology and how it will change the way we interact with the world around us.

Apple’s Vision Pro and visionOS SDK represent a significant step forward in the development of wearable devices, and the visionOS simulator gives developers an early look at what is to come.

If you’re a developer interested in creating spatial computing applications, be sure to check out the visionOS SDK and apply for the Developer Kit when it becomes available next month. And if you’re just a curious tech enthusiast like us, keep your eyes peeled for the release of Vision Pro and the exciting new applications that are sure to follow.
